Munich-based, globally connected

We design non-verbal communication systems for products that interact with people — feedback, presence, adaptive behavior.

A complete UX sound system where audio cues replace visual menus: "Drive-By-Ear." Every interaction state has a distinct sonic signature, from media and navigation to climate control. Eyes on the road, ears on the interface. Presented at Paris Mondial de l'Auto 2022.

A non-verbal communication system for a surgical imaging robot, with distinct sound signatures for movement types, radiation intensity levels, and system states. It replaces generic audio cues that were confused with patient monitors, reducing cognitive load and allowing surgeons to keep their attention on the patient.

Brand-specific start-up and shut-down sounds in Dolby Atmos for Harman Kardon, a UI sound system, and an atmospheric sound layer bridging silence to music. The project evolved into a redefinition of the Harman Kardon sonic brand identity.

Systems that generate sound combinatorically from contextual parameters — weather, time of day, location, and user state — instead of playing static files. Developed across multiple prototypes and industry collaborations, this line of research leads directly to CORPUS Reef — our real-time generative sound model.

Sound strategy, adaptive audio systems, and generative AI for products that interact with people. We work across the full arc — from defining a product's sonic identity to shipping production-ready systems.

A product's complete sound language — feedback, notifications, ambient presence, sonic branding — designed as one coherent system. Delivered as production-ready assets in Stereo, Dolby Atmos, MPEG-H, Auro 3D. Middleware-integrated.

State-based sound architectures mapping system logic to parameterized audio behavior in real time. Situation-adaptive audio, ambient states, driving dynamics, user state. Built with FMOD, Wwise, custom engines. Edge-deployable.

Certified R&D lab (BSFZ Forschungszulage). Context-sensitive sound generation, interaction models, generative audio for industrial applications. We help partners structure joint R&D proposals for public funding.

Sound aesthetics and use case design. Multiple approaches, narrowed to one in dialogue with the client.

Built and tested in our Immersive Sound Stage (ISS) — a modular multi-speaker environment for spatial audio prototyping.

User testing in simulated environments. Measuring emotional response, cognitive load, and brand coherence.

Production-ready assets in all major formats (Stereo, Dolby Atmos, MPEG-H, Auro 3D). On-device mastering and technical integration supervision.

Not a collection of sound files — a semantic system. A layer that interprets context, behavior, and environment, and translates them into meaningful sonic responses. CORPUS Reef generates the sound in real time — controllable and expressive. CORPUS provides the training data: legally compliant and musically distinctive.

Adaptive audio systems can't scale with static sound libraries: every additional context parameter multiplies the number of required variants, so the total grows exponentially with the number of parameters. Generative AI is the only path, but it needs training data that is legally compliant and musically authentic. Scraping the internet is not an option.
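To make the scaling argument concrete, here is a small worked example in Python. The parameter names and the number of values each can take are assumptions picked for illustration, not figures from a real project.

```python
from math import prod

# Hypothetical context parameters and how many discrete values each can take.
context_levels = {
    "weather": 4,
    "time_of_day": 4,
    "location": 5,
    "user_state": 3,
}


def variants_per_cue(levels: dict[str, int]) -> int:
    """Static files needed to cover every context combination for a single cue."""
    return prod(levels.values())


base = variants_per_cue(context_levels)  # 4 * 4 * 5 * 3 = 240

# Adding one more three-valued parameter triples the count for every cue.
extended = variants_per_cue({**context_levels, "driving_mode": 3})  # 720
```

Under these assumed numbers, a library of 50 cues would already need 12,000 static files before the extra parameter and 36,000 after it.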

CORPUS is the licensing and royalty protocol that makes Reef possible. Musicians contribute, keep their rights, and receive royalties based on the value their work creates. For partners who define their brand through musical authenticity, this matters: the provenance is real.

corpus.music

Our background is in composition, theatre, and interactive media, but also in commercial production, brand communication, and industrial events. That combination is why our work sounds different. We bring artistic judgement with a clear awareness of brand, product, and delivery.

Designing a product's sonic identity is character design. Making that identity respond to context in real time is adaptive composition. Both require artistic judgement — applied with engineering discipline.

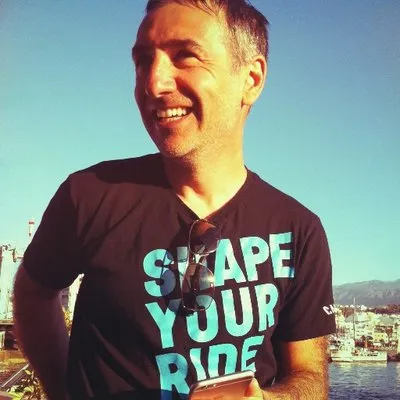

Founded in 2017 by Mathis Nitschke, composer and sound designer with three decades of experience across film scoring, theatre, game audio, commercial production, and immersive installations. Since 2019, the studio has focused on auditory interaction for industrial partners, combining artistic practice with research in psychoacoustics, spatial audio, and generative models.

Most of our team members are interdisciplinary by nature: engineers who are also artists, researchers who compose. Our core team brings decades of combined experience in adaptive and interactive music, game audio, and generative systems. Projects delivered in automotive, medical technology, and consumer electronics.